Welcome to my corner of the internet where I share my adventures in tech tinkering, homelab experiments, maker projects, and cycling escapades. Whether I’m setting up servers in my home lab, building something cool, or hitting the trails on two wheels, I’ll try to document the journey. Stick around if you’re into any of these things – I’m always excited to share what I learn along the way!

Fully Automated Kubernetes Cluster: Proxmox, Talos, OpenTofu and Forgejo

I’ve been running a homelab Kubernetes cluster for a while now, and kept meaning to write up how it’s all wired together. This is an overview of what will become a series of posts. I’ll be looking to cover each area of the setup, building it up as we go, including code.

The main tools I’ve used in this build are Proxmox as a hypervisor running the VMs that make up the Kubernetes cluster. Talos Linux, the immutable Linux distro I’ve favoured to build the cluster. ArgoCD, to add the GitOps approach to deploying apps onto my cluster. OpenTofu to deploy parts of the setup and Forgejo to host the Git repos, Container images and run workflows as part of the deployment.

By Jon Archer

Continue reading...Cycling to Carnforth via the Trough of Bowland

By Jon Archer

Continue reading...2024 Cycling Recap

By Jon Archer

Continue reading...Cycling the Old Railway Lines: Bury to Rawtenstall

By Jon Archer

Continue reading...Cycling Bolton Abbey to Burnsall: An Impromptu Adventure in the Yorkshire Dales

By Jon Archer

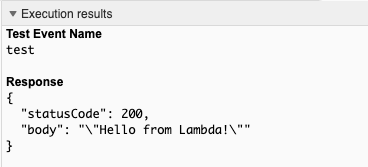

Continue reading...Terraforming Serverless

By Jon Archer

Continue reading...Blogging With Best Intentions

By Jon Archer

Continue reading...GoingOffGrid Pt2

By Jon Archer

Continue reading...GoingOffGrid

By Jon Archer

Continue reading...